As we hit the final stretch of the school year and prepare to announce our next Call for Effective Technology (CET) grantee cohort, it’s worth reflecting on what we’ve learned from the first one. What we’re seeing reaffirms much of what we’ve learned from high-dosage tutoring over the past four years, with some twists that are specific to AI.

1. Humans still matter. A lot.

There’s been a lot of hyperbole about AI taking teachers’ jobs. In reality, it’s teachers who determine whether AI plays any role in their classrooms at all. If a teacher doesn’t see the value in a tool, they can put AI out of a job before it ever gets in front of a student. Teacher agency shows up in three ways:

- Whether to assign it. AI platforms capture usage data with a precision tutoring providers often can’t match. The question isn’t whether we know a tool is being used; it’s whether teachers believe the tool solves a problem for them. For one literacy grantee, 65% of participating teachers gave the tool a 9 or 10 on the Net Promoter Score scale because it generates differentiated decodable texts aligned to what students have already been taught in Tier 1. That solves a real problem.

- For what purpose. Teachers decide how a tool gets used, sometimes in ways the tool wasn’t designed for. One Arizona district deployed a multimodal math tutor across 42 schools — purchased specifically for Tier 2/3 intervention, with teachers instructed not to use it for Tier 1. Teachers used it for Tier 1 anyway, and most of the tool’s usage there now comes from that off-label adoption. A grantee’s implementation design is, in practice, a hypothesis about what teachers will do. Teachers get the final say.

- In what context. Student engagement depends on the environment around the tool. In one implementation, students were too self-conscious to practice speaking into microphones around peers, so teachers began playing music quietly in the background to ease the inhibition. In another deployment, schools with a literacy specialist actively supporting participating teachers had meaningfully higher engagement than schools where individual teachers opted in without site-level backing.

If humans are the gatekeepers, designing for them isn’t a concession. It’s the strategy.

2. Precision is what makes AI special.

The capacity to target an individual student’s specific gap (without giving away answers, and while remaining coherent with Tier 1 instruction) is what genuinely distinguishes AI from prior generations of edtech. Across our cohort, precision shows up as decodable texts generated at exactly the phonemic level a student is working on; as math misconception detection that knows which misconception is driving a wrong answer (and therefore doesn’t respond to every wrong answer with the same generic hint); and as writing activities embedded directly into the texts students are already reading, with differentiated feedback right at the student’s level.

What makes this kind of precision possible is that AI tools can theoretically hold the full Tier 1 scope and sequence, Tier 1 content, prior assessments a student has taken, and a map of common misconceptions in a domain. Even an excellent human tutor may struggle to hold all that in their working memory at once. A well-designed AI tool can know the student, the curriculum, and the misconception landscape at the same time, and respond to the intersection of all three in real time.

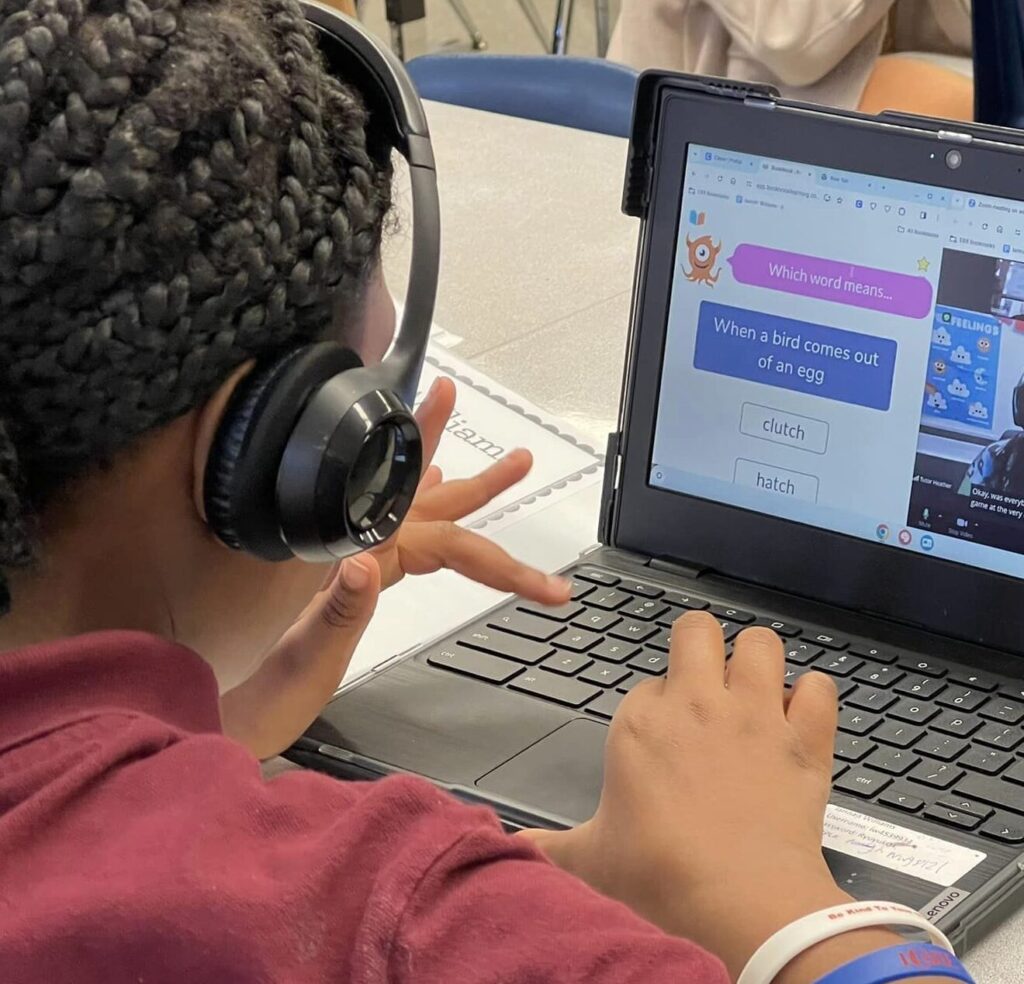

3. Multimodal beats chatbot.

The qualitative feedback in this cohort, from both teachers and students, clusters around tools that let students do something beyond typing and reading: speaking, drawing, recording, inhabiting an immersive scene. One theory: students who have grown up with interfaces that listen, watch, and respond experience a text-only chatbot as a regression, not a feature. The emerging thread isn’t any single modality winning. It’s that students are being asked to produce something (e.g., handwritten math, a recorded story, a spoken explanation) rather than just respond, and to engage with the tool in ways that go beyond typing.

4. We have more data than ever, and fewer insights than we need.

AI platforms capture engagement data instantly. That’s a genuine upgrade over tutoring, where implementation fidelity data takes weeks to assemble. But there’s a catch: in tutoring, four years of research has given us reasonable confidence that certain ingredients plus adequate dosage produce learning gains. We know the recipe. In AI, we don’t. We can’t yet point to usage as a reliable indicator of impact, because we don’t yet know which design features at which dosage produce which outcomes. Engagement signals are fast and strong. Learning signals are slower. That gap will narrow with time and more studies. At the moment, the grantees whose tools surface learning insights fastest tend to share a design trait: they’ve built tight instructional loops into the product so that learning signals are generated continuously.

For now, this is what we’re seeing in the field. Formal research from our cohort is coming this summer, and our 2026-27 grantees will pick up where this group leaves off.

Jennifer Bronson is the Managing Director of Learning Programs at Accelerate.